Information Theory: How Shannon Turned Communication into Mathematics

Discover how Claude Shannon's revolutionary framework transformed our understanding of communication, uncertainty, and data — becoming the invisible mathematical foundation of the digital age.

In 1948, a quiet but revolutionary paper, "A Mathematical Theory of Communication," appeared in the Bell System Technical Journal. Its author, Claude Shannon, proposed something that seemed almost too simple to be profound: that information could be measured and analyzed mathematically, independent of what a message means. That insight became the foundation of the framework now called information theory, and much of the digital age rests on it.

What Is Information, Really?

Before Shannon, information was a vague, intuitive concept. Engineers understood it had something to do with messages and signals, but there was no rigorous way to measure it. Shannon changed that by defining information in terms of uncertainty. The more surprising a message is, the more information it carries. A message that simply confirms what you already know carries almost no information. A message that resolves deep uncertainty carries a great deal.
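To make the link between surprise and information concrete, here is a minimal Python sketch. It assumes the standard definition of self-information, the base-2 logarithm of one over an outcome's probability; the function name `surprisal_bits` is chosen here for illustration and does not come from Shannon's paper.

```python
import math

def surprisal_bits(p: float) -> float:
    """Information carried by an outcome of probability p, in bits."""
    if not 0.0 < p <= 1.0:
        raise ValueError("p must be in the interval (0, 1]")
    # Rare outcomes (small p) give a large value; certain ones give zero.
    return math.log2(1.0 / p)

print(surprisal_bits(0.5))       # fair coin flip: 1.0 bit
print(surprisal_bits(0.99))      # nearly certain outcome: about 0.0145 bits
print(surprisal_bits(1 / 1024))  # one-in-1024 outcome: 10.0 bits
```

A message you fully expected contributes almost nothing, while a one-in-1024 outcome is worth ten yes/no questions.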

Shannon quantified this idea with a unit called the bit, short for binary digit, a name he credited to John W. Tukey. A single bit is the information gained from the answer to a yes/no question whose two answers are equally likely. The real power of the framework, though, lies in the concept of entropy, which measures the average information content per symbol of a source. High-entropy sources are unpredictable and information-rich. Low-entropy sources are repetitive and easily compressed.

The Mathematics of Surprise

Shannon's entropy formula is elegant in its simplicity. For a message source with several possible outcomes, each occurring with probability $p_i$, the entropy is the sum over all outcomes of each probability multiplied by the base-2 logarithm of its inverse: $H = \sum_i p_i \log_2(1/p_i)$. The result is a single number, measured in bits, that captures how uncertain, or surprising, the source is on average.
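The entropy calculation described above can be sketched in a few lines of Python. This is an illustrative implementation, not code from any particular library; the function name `entropy_bits` is an assumption, and outcomes with zero probability are skipped because they contribute nothing to the sum.

```python
import math

def entropy_bits(probs):
    """Shannon entropy, in bits, of a discrete probability distribution."""
    if any(p < 0 for p in probs) or not math.isclose(sum(probs), 1.0, abs_tol=1e-9):
        raise ValueError("probabilities must be non-negative and sum to 1")
    # Sum of p * log2(1/p); zero-probability outcomes are skipped.
    return sum(p * math.log2(1.0 / p) for p in probs if p > 0)

print(entropy_bits([0.5, 0.5]))    # fair coin: 1.0 bit
print(entropy_bits([0.9, 0.1]))    # biased coin: about 0.469 bits
print(entropy_bits([0.25] * 4))    # four equally likely outcomes: 2.0 bits
```

The biased coin is more predictable than the fair one, so each flip tells you less than one full bit on average.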

Consider the letters of the English language. Some letters, like e and t, appear frequently. Others, like q and z, are rare. Because English has predictable patterns, it has lower entropy than a purely random sequence of characters. This is precisely why English text can be compressed — software can exploit redundancy to represent the same content more efficiently.
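A rough way to see this effect is to estimate per-character entropy from letter frequencies and compare it with a uniformly random source over the same alphabet. The sketch below is only illustrative: the sample sentence is far too short for a serious estimate, the 27-symbol alphabet (26 letters plus space) is an assumption, and counting single characters ignores the patterns between letters, so it overstates how unpredictable real English is.

```python
import math
from collections import Counter

ALPHABET = "abcdefghijklmnopqrstuvwxyz "  # assumed alphabet: 26 letters plus space

def empirical_entropy_bits(text: str) -> float:
    """Per-character entropy estimated from single-character frequencies."""
    chars = [c for c in text.lower() if c in ALPHABET]
    n = len(chars)
    if n == 0:
        return 0.0
    counts = Counter(chars)
    # Each character with count k has estimated probability k / n.
    return sum((k / n) * math.log2(n / k) for k in counts.values())

# Illustrative sample only; a real estimate would need a much larger corpus.
sample = (
    "the more surprising a message is the more information it carries "
    "a message that simply confirms what you already know carries almost "
    "no information"
)

english = empirical_entropy_bits(sample)
uniform = math.log2(len(ALPHABET))  # every symbol equally likely

print(f"sample text:    {english:.2f} bits per character")
print(f"uniform random: {uniform:.2f} bits per character")
```

The gap between the two numbers is the redundancy that a compressor can, in principle, remove.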

Channel Capacity and the Noise Problem

Shannon did not stop at measuring information. He also tackled one of the central practical problems of communication: noise. Any real channel — a telephone wire, a radio link, an optical fiber — introduces distortions and errors. For decades, engineers believed that to make communication more reliable, you inevitably had to slow it down. Shannon proved this belief was wrong.

His channel capacity theorem showed that every noisy channel has a theoretical maximum rate, its capacity, below which information can be transmitted with arbitrarily small probability of error. For the common model of a band-limited channel with Gaussian noise, this capacity depends on the channel's bandwidth and signal-to-noise ratio. Below capacity, the error rate can be driven as close to zero as desired with suitable coding. Above it, errors become unavoidable regardless of technique.
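For the specific case of a band-limited channel with additive white Gaussian noise, the dependence on bandwidth and signal-to-noise ratio is given by the Shannon-Hartley formula, C = B log2(1 + S/N). The Python sketch below evaluates it; the function name and the example figures (a 3 kHz channel at 30 dB) are assumptions chosen for illustration, and other channel models have different capacity expressions.

```python
import math

def shannon_hartley_capacity(bandwidth_hz: float, snr_db: float) -> float:
    """Capacity in bits per second of an AWGN channel (Shannon-Hartley)."""
    snr_linear = 10 ** (snr_db / 10)  # convert decibels to a power ratio
    return bandwidth_hz * math.log2(1 + snr_linear)

# Hypothetical example: 3 kHz of bandwidth at a 30 dB signal-to-noise ratio.
capacity = shannon_hartley_capacity(3000, 30)
print(f"{capacity:.0f} bits per second")  # roughly 29,900
```

Doubling the bandwidth doubles the capacity, while doubling the signal power adds only a little, because the signal-to-noise ratio sits inside the logarithm.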

This result was startling. It meant that the right question was not how much reliability to sacrifice for speed, but how to design codes that approach the theoretical limit. Decades of work in coding theory, from Hamming codes to turbo codes to the low-density parity-check codes used in modern Wi-Fi and cellular networks, have been a direct response to that challenge.
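As a small taste of what such codes look like, here is a sketch of the classic Hamming(7,4) code, which adds three parity bits to four data bits and can correct any single flipped bit. It uses the conventional layout with parity bits at positions 1, 2, and 4; the function names are illustrative. This early code is far from Shannon's limit, and modern turbo and LDPC codes are considerably more elaborate.

```python
def hamming74_encode(data):
    """Encode 4 data bits into the codeword [p1, p2, d1, p3, d2, d3, d4]."""
    d1, d2, d3, d4 = data
    p1 = d1 ^ d2 ^ d4  # parity over positions 1, 3, 5, 7
    p2 = d1 ^ d3 ^ d4  # parity over positions 2, 3, 6, 7
    p3 = d2 ^ d3 ^ d4  # parity over positions 4, 5, 6, 7
    return [p1, p2, d1, p3, d2, d3, d4]

def hamming74_decode(received):
    """Correct at most one flipped bit, then return the 4 data bits."""
    c = list(received)
    s1 = c[0] ^ c[2] ^ c[4] ^ c[6]
    s2 = c[1] ^ c[2] ^ c[5] ^ c[6]
    s3 = c[3] ^ c[4] ^ c[5] ^ c[6]
    # The three checks spell out the 1-based position of the error in binary.
    error_position = s1 + 2 * s2 + 4 * s3  # 0 means no error detected
    if error_position:
        c[error_position - 1] ^= 1
    return [c[2], c[4], c[5], c[6]]

message = [1, 0, 1, 1]
codeword = hamming74_encode(message)
corrupted = codeword.copy()
corrupted[4] ^= 1  # simulate channel noise flipping one bit

print(codeword)                     # [0, 1, 1, 0, 0, 1, 1]
print(corrupted)                    # [0, 1, 1, 0, 1, 1, 1]
print(hamming74_decode(corrupted))  # [1, 0, 1, 1]
```

The price of this protection is rate: seven bits are sent for every four bits of data, and two flipped bits in the same codeword would be decoded incorrectly.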

Compression: Squeezing Out Redundancy

Shannon's source coding theorem addressed compression directly. It states that a source cannot be losslessly compressed, on average, to fewer bits per symbol than its entropy, but that with the right coding scheme you can get arbitrarily close. This limit underlies the lossless compression formats in use today, and a related branch of the theory, rate-distortion theory, describes the trade-offs made by lossy audio, image, and video codecs.
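Huffman coding is one well-known scheme that approaches the entropy limit, and it makes a convenient illustration even though the text above does not name it. The sketch below builds Huffman code lengths for a hypothetical four-symbol source and compares the average code length with the source entropy. The probabilities are deliberately chosen as powers of one half, a special case in which the two numbers match exactly; for other distributions the average length is somewhat above the entropy.

```python
import heapq
import math

def huffman_code_lengths(probs):
    """Return {symbol: code length in bits} for a Huffman code."""
    # Heap entries: (probability, tie_breaker, {symbol: depth so far}).
    heap = [(p, i, {sym: 0}) for i, (sym, p) in enumerate(probs.items())]
    heapq.heapify(heap)
    next_id = len(heap)
    while len(heap) > 1:
        p1, _, left = heapq.heappop(heap)
        p2, _, right = heapq.heappop(heap)
        # Merging two subtrees pushes every symbol in them one level deeper.
        merged = {sym: depth + 1 for sym, depth in {**left, **right}.items()}
        heapq.heappush(heap, (p1 + p2, next_id, merged))
        next_id += 1
    return heap[0][2]

# Hypothetical source with four symbols.
source = {"a": 0.5, "b": 0.25, "c": 0.125, "d": 0.125}

lengths = huffman_code_lengths(source)
average_length = sum(source[s] * lengths[s] for s in source)
entropy = sum(p * math.log2(1.0 / p) for p in source.values())

print(lengths)         # {'a': 1, 'b': 2, 'c': 3, 'd': 3}
print(average_length)  # 1.75
print(entropy)         # 1.75
```

Frequent symbols get short codewords and rare symbols get long ones, which is how the average is pulled down toward the entropy.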

Lossless compression removes redundancy without losing any information. Lossy compression discards information that human perception is unlikely to notice — leveraging the limits of human senses to shrink file sizes dramatically while preserving perceived quality.
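The difference between the two approaches can be sketched with Python's standard `zlib` module for the lossless case and a simple rounding step for the lossy case. The quantizer here is a deliberately crude stand-in and an assumption of this example: real lossy codecs use perceptual models to decide what to discard, which this sketch does not attempt.

```python
import zlib

# Lossless: redundant text shrinks, and the original is recovered exactly.
text = ("Low-entropy sources are repetitive and easily compressed. " * 20).encode("utf-8")
packed = zlib.compress(text)
restored = zlib.decompress(packed)
print(len(text), "->", len(packed), "bytes; identical:", restored == text)

# Lossy (toy example): snap each sample to the nearest multiple of `step`.
def quantize(samples, step):
    return [round(x / step) * step for x in samples]

samples = [0.12, 0.47, 0.51, 0.88]
coarse = quantize(samples, step=0.25)
worst_error = max(abs(a - b) for a, b in zip(samples, coarse))

print(coarse)  # [0.0, 0.5, 0.5, 1.0]
print(f"largest error introduced: {worst_error:.2f}")
```

After quantization only five distinct values remain in the range from 0 to 1, so each sample needs fewer bits, but the original values cannot be recovered.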

Information Theory Beyond Engineering

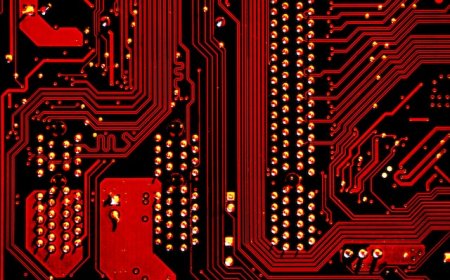

Shannon's framework has proven useful far beyond its original engineering context. In biology, information theory helps analyze the genetic code and describe how efficiently DNA encodes protein-building instructions. In neuroscience, researchers use entropy to study how the brain processes and represents sensory information. In machine learning, concepts like cross-entropy and mutual information are core tools for training models and measuring their performance.
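Two of the machine learning quantities mentioned above can be sketched directly from their standard definitions. The function names and example distributions below are illustrative assumptions; practical libraries typically work with natural logarithms and batches of predictions rather than single distributions.

```python
import math

def cross_entropy_bits(p, q):
    """Average bits needed to encode outcomes from p using a code built for q."""
    total = 0.0
    for pi, qi in zip(p, q):
        if pi > 0:
            if qi == 0:
                return math.inf  # q rules out something that actually happens
            total += pi * math.log2(1.0 / qi)
    return total

def mutual_information_bits(joint):
    """Mutual information of two variables from their joint probability table."""
    row_sums = [sum(row) for row in joint]
    col_sums = [sum(col) for col in zip(*joint)]
    return sum(
        pxy * math.log2(pxy / (row_sums[i] * col_sums[j]))
        for i, row in enumerate(joint)
        for j, pxy in enumerate(row)
        if pxy > 0
    )

truth = [0.5, 0.5]
model = [0.9, 0.1]
print(cross_entropy_bits(truth, truth))  # 1.0, the entropy of the true source
print(cross_entropy_bits(truth, model))  # about 1.737, the cost of a wrong model

print(mutual_information_bits([[0.25, 0.25], [0.25, 0.25]]))  # independent: 0.0
print(mutual_information_bits([[0.5, 0.0], [0.0, 0.5]]))      # identical: 1.0
```

Cross-entropy is smallest when the model matches the true distribution, which is why it serves as a training loss, and mutual information is zero exactly when knowing one variable tells you nothing about the other.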

The universality of the framework is itself a remarkable insight: information is not a property of any particular technology or medium. It is a fundamental feature of any system that can be in multiple states — from neurons to semiconductors, from genes to market prices. Shannon gave us a language for describing uncertainty itself, and that language turns out to apply nearly everywhere.

Nearly eight decades after the original paper, the digital world it helped create has become so embedded in daily life that its mathematical foundations are invisible to most people. Yet every time data is compressed, transmitted, encrypted, or decoded, that foundational work is quietly at play — turning uncertainty into order, noise into signal, and the possible into the precise.
